PSYCHOLOGY OF SAFETY: The myth of the 'root cause'

I hope you see how silly it is to believe the root cause of something can be discovered by asking "Why did this happen?" and obtaining five successive answers. But can I convince you that there is really no single root cause to uncover - at least not with the methods typically used in an incident analysis (such as perception surveys and interpersonal conversations)?

More importantly, I want to persuade you that the term "root cause" is actually detrimental to obtaining optimal participation in an incident analysis and to defining the most effective ways to prevent recurrence of the mishap. Let's start with a discussion of cause-and-effect relationships.

What is cause-and-effect?

Real-world events - both desirable and undesirable - have causes. Events, including behaviors, do not happen by themselves or for no reason. In fact, the notion that every event has a specific cause is a basic assumption of science. Researchers apply the scientific method to search for the causes of events or behaviors. But this is easier said than done. Let's consider three criteria, each of which is required to infer causation between a certain factor and an event.

Covariation: This is the easiest criterion to establish. It means the presumed causal factor and the event (or behavior) vary together. In other words, the factor must be present when the event (or behavior) occurs.

This criterion for identifying a cause-and-effect relationship can be measured with a survey or by behavioral sampling. Surveys can show correlations between two or more factors, and observations of a behavior within specific surroundings can demonstrate that a behavior and an environmental factor covary - they occur together in time and space.

Time-order relationship: Demonstrating a cause-and-effect relationship, however, requires more than covariation. It is also necessary to determine which variable, factor, or event occurred first. Such a time-order relationship can rarely be obtained from surveys. And a sampling of behavioral and environmental factors does not necessarily indicate which came first. Actually, this criterion can only be satisfied when the presumed causal factor is manipulated (as an independent variable) and its impact is subsequently observed in another factor (the dependent variable).

No other explanation: Covariation and time-order relationships are necessary, but not sufficient, for establishing a cause-and-effect relationship. One more criterion must be met. Specifically, there can be no other viable explanation for the observed relationship. Researchers manipulate an independent variable and look for a predicted change in a dependent variable.

But even when researchers observe an expected change in perception, attitude or behavior following the introduction or removal of a particular environmental factor, they do not presume a cause-and-effect relationship. Only when all other possible explanations can be eliminated is a cause-and-effect statement legitimate. For example, the researcher in Figure 1 should not presume the drug caused the observed change in the rat's behavior, because there is an alternative explanation.

Researchers eliminate alternative explanations for a cause-and-effect relationship through experimental design. They might use a control group, for example, or observe the behaviors of the same individuals before and after introducing or removing an independent variable. A research design that eliminates other possible explanations for a cause-and-effect observation is considered "internally valid."

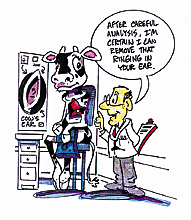

A discussion of various research designs and their associated internal validity is beyond the scope of this article. But to help you appreciate the challenge of satisfying this third criterion for causal inference, take a look at Figure 2.

Does this illustration depict a cause-and-effect relationship? In fact, the humor in the drawing is based on a self-evident root cause of the ringing in the cow's ear. The bell causes the ringing, right? But is there another possible explanation? Could other noises have caused the ringing problem? Is it possible the cow was born with the problem, or developed it over time from other noise, independent of the bell around its neck? How can we test whether the bell is the cause?

I'm sure you guessed one answer. Take away the bell and see if the ringing goes away. Might the ringing continue after the bell is removed? Perhaps the bell has caused permanent damage to the cow's ear, and ringing continues without the bell. Bottom line: It is not so easy to show a cause-and-effect relationship.

Skeptical researchers vs. risky safety pros

You will rarely, if ever, hear researchers state a causal relationship between a variable they manipulated and a predicted and observed change in a certain dependent variable. They won't say, "Environmental factor A caused the observed change in behavior or attitude B." The most they would say is something like, "Factor B changed after the manipulated change in Factor A, suggesting a causal link between the two variables."

Researchers in the behavioral and social sciences are skeptical and conservative, and rarely claim they found a cause-and-effect relationship. In contrast, safety professionals are quite liberal (or risky) with cause-and-effect language. They look for "root causes" of incidents, and often claim they found them. How? Through interviews, surveys, and sometimes a few behavioral observations. These techniques are insufficient for establishing causal relationships.

The author of an article in the March 2002 edition of ISHN claimed his organization had conducted research that identified nine root causes of performance excellence.

How did they do this? While the article did not detail the methodology, it was evident that the research was essentially based on perception surveys. The researchers obviously did not manipulate the presumed causal factors and observe changes in organizational performance. Of the three criteria needed to establish causal factors, only the first could be satisfied by the research purported to identify "nine root causes."

Bottom line: Safety pros are too cavalier with the term "root cause." They do not and cannot use the methods needed to find a root cause. As a result, they necessarily make inaccurate or invalid interpretations when looking for cause-and-effect relationships with survey, interview and observational techniques that can only satisfy one of the three criteria required for the identification of causal relationships.

Inhibiting involvement

If safety pros were to adopt the skepticism and conservatism of the researcher, they might make more appropriate judgments about the factors potentially contributing to an incident. Plus, they might broaden the spectrum of corrective action.

Most importantly, the search for a "root cause" can seem like "fault finding" rather than "fact finding," eventually leading to one person presumed to be responsible - the "root cause." Most employees want no part of such an "investigation" or "interrogation." Instead, the objective of an incident analysis should be to define the various factors that could have contributed to an incident, whether it's a near hit, property damage, or a personal injury.

As I discussed in my February 1999 ISHN column, the multiple factors that contribute to an incident can be categorized as environmental, behavioral or personal. And a comprehensive corrective action addresses each of these domains.

You need people to help you identify the variety of possible factors contributing to an incident and explore the diverse array of corrective actions that could prevent another similar incident. Terminology like "possible" and "could" rather than "root cause" is not only more accurate, given the methodology used in an incident analysis, but it also enables a mindset or paradigm for the kind of frank and open dialogue needed for a comprehensive search for ways to make a workplace safer.

Editor's Note: Following up on Scott's June column, his prostate surgery went well, he's on the road to full recovery, and in fact Scott attended the American Society of Safety Engineers national meeting in Nashville, giving two presentations.
